The Machines Have Already Won

What thirty years of chess history can teach us about the future of generative AI.

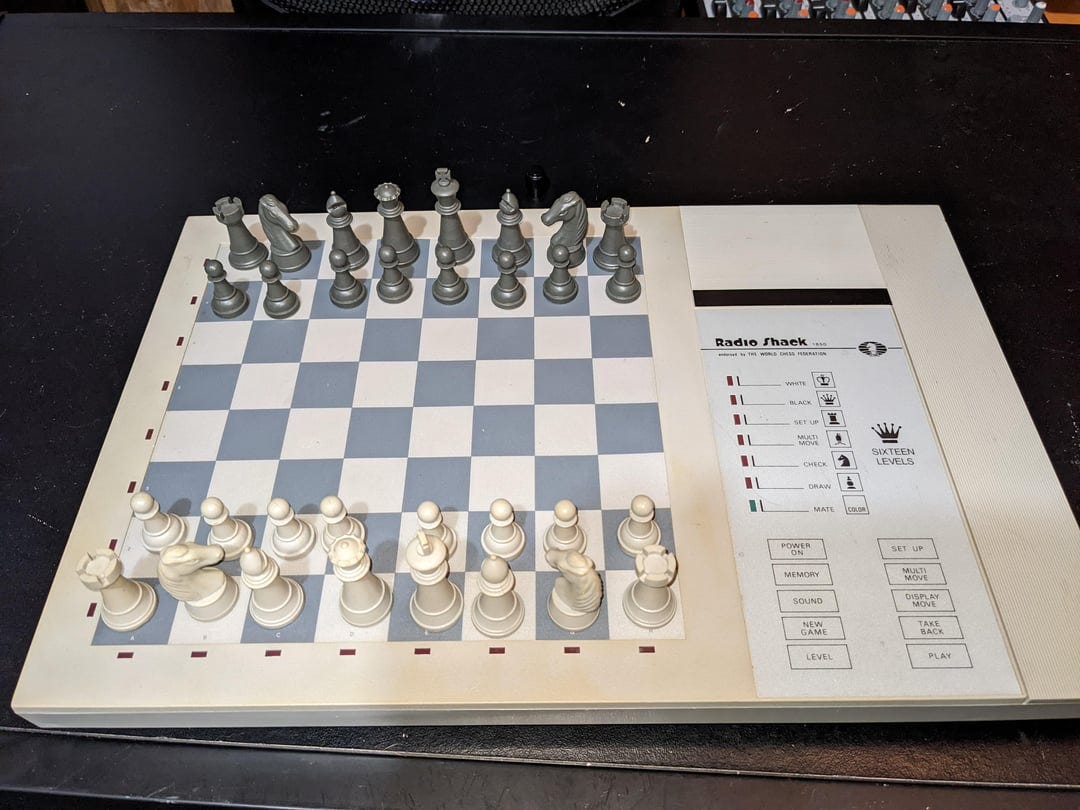

Roughly thirty years ago my parents bought me a chess computer, one of those electronic boards where you had to press the squares to input your moves and then follow the glowing red lights that indicated the computer’s reply. I was not a very strong chess player, but neither was the computer; we were well suited for one another and played a few hundred games together that taught me a lot about chess.

This wasn’t exactly cutting-edge technology, but the chess world didn’t have long to wait. In 1996 Garry Kasparov lost a game to IBM’s Deep Blue; in 1997 he lost a six-game match to it. Then the chess engine arms race took off: Fritz, Rybka, Stockfish, Leela. In my adult years I’ve lost games to smartphones and airplane consoles. I’ve given up playing against chess engines because I know I’m going to lose, often in the first 25 moves, and it’s not just me. It’s been a long time since any human being could give a computer a good fight.

It’s this history of being dominated by computers that gives chess players a unique insight into a future defined in part by our use of generative AI. I’m writing this post with some trepidation. I’m not a computer scientist, and I don’t claim to understand the mechanism by which machines play chess or write an essay.[1] But as a high school history teacher and chess player, I have a lot of experience with how people interact with machines that can imitate aspects of human intelligence at a high level.[2] With that in mind, I made a list of how computers have changed chess and considered the roughly equivalent ways that generative AI will change, and already is changing, how we learn.

Information and Trust

Most of the chess books I owned in the 90s made ample use of the unclear symbol, aka the infinity sign, for the simple reason that chess is almost infinitely large and there are a lot of positions that are too difficult for humans to evaluate accurately. I’m not sure exactly when the unclear symbol met its demise, but it’s irrelevant to chess analysis and annotations of the present because chess engines can evaluate any position with an extraordinary degree of accuracy. I trust computer evaluations of chess positions implicitly because I lack the expertise and ability to prove them wrong: the only thing that can do that is a stronger chess engine.

This suggests a future for generative AI that cuts against the grain of my Luddite instincts. AI-powered search functions will become more accurate and precise over time, just as chess engines have over the past few decades, so much so that the information they provide will be widely accepted as true: a rebirth of the shared set of ideas about how the world works that has eroded in the age of social media. You can certainly make the argument that the chess engine analogy is problematic (bad actors will try to manipulate generative AI for political or financial gain in a way that never happened in the development of computer chess[3]), but I think there’s reason to be hopeful. The data set used by generative AI is enormous and thus challenging to manipulate. There will be a strong incentive for developers of AI to build trustworthy platforms that people want to use. And AI in its infancy, right here in 2024, is already pretty good at separating good information from bad and explaining its reasoning.[4] After all, a chess engine evaluation isn’t worth much without a variation to support it.

Feedback, Practice, and Cutting Corners

Before chess engines the only realistic way to become a strong chess player was by getting a coach and buying a lot of books. This was easier to do in some places than in others. There’s a reason that a lot of kids born in the late 70s/early 80s in the Soviet Union became grandmasters, compared with the paucity of titled players from my generation in the United States.

One of the most important factors in learning of any kind is feedback: immediate, relevant, and descriptive. A coach can do this, but a chess engine can as well. When I play in a tournament, I feed the game into an engine to check for blunders, interesting ideas I might have missed, and whether or not my sense of who held the advantage matches the computer’s evaluation. The fact that I can do this immediately after a game and get feedback that I know is trustworthy (see the section above) is incredibly powerful, and a big part of the reason why I managed to keep improving at chess into my 30s.

Writing instruction is in some ways more complicated: depending on the genre of writing, current AI feedback will fall on a continuum from perfectly relevant to completely ridiculous. But a lot of the writing people do as adults is more functional than artistic, and generative AI should be able to provide targeted coaching for this kind of writing without too much trouble. You could also imagine an AI program generating practice writing prompts and then providing feedback in the same way that a chess player can use an engine as a sparring partner.

I don’t mean to suggest that the future of learning involves cutting out human teachers and coaches. Much of what I’ve learned about chess has come from other human beings, in the form of conversations, books, and competitive games. As a teacher I know that emotion and connection are key to learning, but also that there’s no good way for one teacher to provide immediate and specific feedback to 150 students.

Finally, chess engines and generative AI also have a tendency to undercut learning: they allow the learner to cut corners by having the machine do the thing that the learner should be struggling to accomplish. For chess, this looks like turning on the engine before I’ve done any of the analysis myself the old-fashioned way, with chess board and notebook. For writing, this means having the AI write the thesis statement or fix the grammar without trying to do these things first by hand.

Cheating

One step beyond cutting corners is outright cheating, and if you think that cheating scandals in the chess world are bad, you probably haven’t spent much time reading student writing over the past two years. Generative AI is everywhere in high schools and colleges. Some cheaters, and most of those who get caught, are unsophisticated, copying and pasting AI output into a Google Doc and turning it in, the writing equivalent of playing the chess engine’s first choice every move as if it were your own. Just as I know that a 1400-rated player on the internet didn’t suddenly get lucky and play a perfect blitz game, I can tell when generative AI is used in place of real student work: high school kids don’t write like the Encyclopaedia Britannica. But despite chess.com’s anti-cheating measures and online AI checkers, proving that someone cheated can be hard to do, particularly when they do it with some level of sophistication.

The motivation of chess cheaters is obvious: chess is a competitive endeavor with rating points, money, and even international titles on the line. But education—and the grades that come with it—is also deeply competitive. There are only so many spots available at the most selective colleges, only so many dollars of scholarship money to go around. People have always cheated, but the problem with chess engines and generative AI is that cheating is so easy.

If there were some sort of easy or elegant solution to this problem, we would have already seen it in chess. Instead we have increasingly strict policing of elite chess events[5] and a patchwork of methods to make cheating more difficult in regular tournaments. The fact that there are money tournaments online feels a bit crazy to me, no matter how confident the powers that be at chess.com feel about their cheating prevention software, and it’s not surprising at all that Vladimir Kramnik, former world champion, got way out over his skis making cheating accusations against other top players.

The moral of the story is that if we want to be sure that a person is doing the thing, whether it be chess or writing, they have to do it in front of someone else, away from a computer. Chess tried blending human and computer expertise in some mixed events in the early 2000s as a way of accommodating chess engines. These engines are so strong now that these kinds of events aren’t particularly interesting, and I have no doubt that the same thing will be true with most forms of writing as generative AI grows by leaps and bounds.

The Sublime

There’s one last piece of the AI puzzle that chess can shed some light on: the question of whether generative AI can create art. Can it write a novel or a sonnet, or create a piece of music that is aesthetically appealing?

I want to say that the answer is no, but then I think about AlphaZero and I’m not so sure. The chess played by AlphaZero when it appeared on the scene in 2017 represented an almost unbelievable leap forward. It humiliated conventional chess engines, like Stockfish, that were previously considered almost unbeatable. Human chess is a game of the near future: we’re spending most of our time calculating one to five moves ahead, trying desperately to prune the branches of the calculation tree. AlphaZero played an otherworldly chess of the distant future, regularly sacrificing material for compensation that wasn’t apparent for ten moves or more.

AlphaZero’s chess is both aesthetic and not. It’s not human chess; it falls clearly into the uncanny valley. But on the other hand, the games of AlphaZero are often far superior examples of what makes chess aesthetically pleasing. The most beautiful games of chess are those where a player sacrifices material for uncertain, long-term compensation (see Kasparov-Topalov for the most famous human example), and AlphaZero does this to perfection.

And so, however reluctantly, I think that generative AI will probably create things that hold artistic merit, that give us a taste of the sublime, even if their alien nature makes them unsettling to behold. But whether they end up creating art or not, it will soon be impossible to think about information and learning without thinking about generative AI, just as it’s impossible to think about chess today without the omnipresent influence of chess engines.

I’m curious about what you all think in the comments. In the meantime, like the guy who watches NASCAR for the wrecks, I’ll be tuning into the Carlsen-Niemann speed chess match in Paris. Thanks for reading.

[1] I also don’t claim to be very up to date: generative AI can probably already do a lot of stuff that I’m not aware of.

[2] I spent a few minutes mucking around on the internet to see if anyone had already written the article I had in mind. There are some pieces out there about chess and AI, but they tend to reduce the chess experience to “even though chess computers are amazing, people still enjoy chess” and the AI experience to “AI is going to help humans solve all sorts of problems and possibly help us commit war crimes, rah rah rah.”

[3] Imagine someone programming a chess engine to give artificially high marks to the black side of the King’s Indian Defense, for example.

[4] For example: when I asked perplexity.ai how to convince someone that vaccines were dangerous and that the earth was flat, it pushed back and said that to do so would contradict current scientific understanding. When I asked it how to convince someone that Santa was real, it gave me parenting advice.

[5] Thanks, no doubt, to the Magnus Carlsen-Hans Niemann affair.